The rise of Artificial Intelligence has been heralded as the most transformative technological leap since the internet itself. From powering personalized medicine and optimizing global supply chains to generating creative content and automating complex industries, AI promises a future of unprecedented efficiency and human capability. We talk about AI in terms of algorithms, models, and breakthroughs—abstract concepts of pure digital intelligence. But beneath the dazzling veneer of code and computation lies a rapidly expanding, profoundly physical reality: the data center.

AI is not just software; it is a massive, energy-hungry industrial undertaking. Every query you ask, every image generated, and every complex calculation performed by a large language model (LLM) requires immense computational power, which, in turn, demands colossal amounts of electricity and generates staggering amounts of waste heat. This hidden infrastructure—the sprawling farms of servers, the cooling towers, and the power grids they tap into—is fundamentally reshaping the global tech landscape, presenting a critical challenge that is as much about thermodynamics and resource management as it is about machine learning.

We are at an inflection point. The exponential growth of AI is creating a physical footprint that demands immediate, global attention. Understanding this footprint—the energy consumption, the thermal load, and the material demands—is crucial for ensuring that the promise of AI can be realized sustainably, without compromising the planet’s resources.

The Data Center Boom: Building the Digital Brain

To grasp the scale of AI’s physical footprint, one must first understand the data center itself. These facilities are not merely rows of blinking lights; they are highly specialized, climate-controlled industrial complexes designed to house the world’s most powerful computing hardware. They are the physical embodiment of the digital economy.

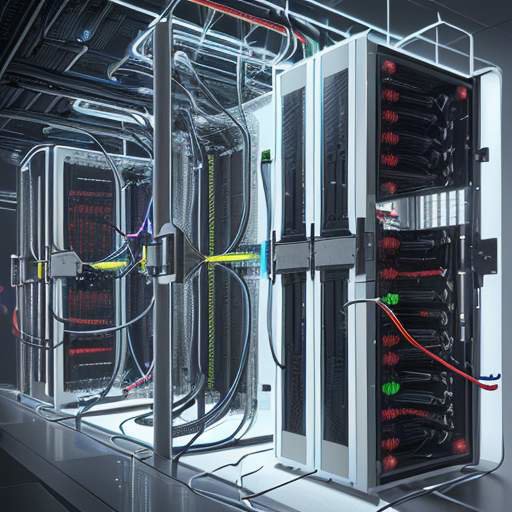

The core components driving this boom are specialized accelerators, most notably Graphics Processing Units (GPUs) and custom AI chips (like Google's TPUs). These chips are built for parallel processing, the ability to perform thousands of calculations simultaneously, which suits the large matrix multiplications at the mathematical core of modern deep learning. As models become larger (moving from billions to trillions of parameters) and the tasks they perform become more complex (multimodal reasoning, real-time video processing), the computational density required skyrockets.
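Here is a minimal pure-Python sketch that makes this parallelism concrete. It is not how GPUs are actually programmed. It only shows that each entry of a matrix product depends on a single row of one matrix and a single column of the other, so all entries can be computed independently and handed to many workers at once. The function names `dot` and `matmul_parallel` are hypothetical, chosen for illustration.

```python
from concurrent.futures import ThreadPoolExecutor

def dot(row, col):
    # One output entry: the dot product of a row of A with a column of B.
    return sum(a * b for a, b in zip(row, col))

def matmul_parallel(A, B):
    # Transpose B so each column is available as a tuple.
    cols = list(zip(*B))
    # Every (i, j) pair is independent of every other pair, so the work
    # can be distributed to many workers at once.
    tasks = [(i, j) for i in range(len(A)) for j in range(len(cols))]
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as the tasks.
        results = list(pool.map(lambda ij: dot(A[ij[0]], cols[ij[1]]), tasks))
    n_cols = len(cols)
    # Reassemble the flat list of results into rows.
    return [results[i * n_cols:(i + 1) * n_cols] for i in range(len(A))]

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul_parallel(A, B))  # [[19, 22], [43, 50]]
```

A GPU applies the same decomposition across thousands of hardware threads. Python threads are used here only to show that the tasks do not depend on each other, not to gain speed.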

A single, cutting-edge AI training cluster can contain thousands of high-end GPUs, each drawing significant power. These clusters are packed so tightly that they represent a monumental concentration of heat and electricity. The sheer density of this hardware is making AI data centers among the most power-intensive buildings on Earth. They require dedicated, robust power feeds, often necessitating the construction of new substations and transmission lines.
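A back-of-the-envelope estimate shows why such clusters need their own grid infrastructure. The sketch below assumes a hypothetical cluster of 10,000 accelerators at 700 W each (roughly the rated power of some current high-end data center GPUs) and a hypothetical 50% overhead for CPUs, networking, storage, and cooling. None of these figures describe a specific facility, and `cluster_power_mw` is an illustrative helper.

```python
def cluster_power_mw(num_accelerators, watts_per_accelerator, overhead_fraction):
    """Rough facility power draw in megawatts.

    overhead_fraction covers CPUs, networking, storage, and cooling on top
    of the accelerators themselves (e.g. 0.5 means 50% extra).
    """
    accel_watts = num_accelerators * watts_per_accelerator
    total_watts = accel_watts * (1 + overhead_fraction)
    return total_watts / 1e6  # watts -> megawatts

# Hypothetical cluster: 10,000 accelerators at 700 W each, 50% overhead.
print(cluster_power_mw(10_000, 700, 0.5))  # 10.5
```

With these assumptions the cluster draws about 10.5 MW around the clock, the kind of load that often requires a dedicated grid connection rather than an ordinary commercial feed.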

The global race to build the next generation of AI infrastructure—the "AI arms race"—is directly translating into a race for real estate, power access, and specialized cooling technology. This boom is geographically uneven, concentrating massive infrastructure investments in regions with reliable, affordable power, creating both economic hubs and potential resource bottlenecks.

The Energy Consumption Crisis: Powering the Intelligence

The most immediate and pressing concern surrounding AI’s physical footprint is its staggering energy consumption. Training and running large AI models are not trivial tasks; they are energy behemoths.

When we discuss the energy cost, we must differentiate between two phases: Training and Inference.

Training: This is the initial, resource-intensive phase in which the model learns from massive datasets. Training a state-of-the-art LLM can consume as much electricity as hundreds of homes use in a year. The process involves an enormous number of calculations (published estimates for frontier models run to 10^23 floating-point operations and beyond) carried out over weeks or months, pushing hardware to its limits. The energy draw is immense, and the computational cycles are the primary cost driver.

Inference: This is the phase in which the model is actually used, for example when you ask a question or when an AI generates an image. While a single query uses far less energy than the initial training, the sheer volume of inference requests globally is projected to grow exponentially. As AI becomes embedded into consumer devices, smart appliances, and business workflows, the cumulative energy demand of inference is expected to become the dominant, persistent load.
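The relationship between the two phases comes down to simple arithmetic: energy equals power multiplied by time. The sketch below estimates the energy of one hypothetical training run and then asks how many inference queries would consume the same amount. Every input (1,000 GPUs, 700 W each, 30 days, an overhead multiplier of 1.2 for cooling and power delivery, and 0.5 Wh per query) is an illustrative assumption, not a measurement of any real model.

```python
def training_energy_mwh(num_gpus, watts_per_gpu, days, overhead):
    # Energy = power x time. `overhead` scales IT energy up to include
    # cooling and power delivery (operators call this ratio PUE).
    hours = days * 24
    it_energy_wh = num_gpus * watts_per_gpu * hours
    return it_energy_wh * overhead / 1e6  # Wh -> MWh

def queries_to_match_training(training_mwh, wh_per_query):
    # Number of inference queries that consume as much energy as training.
    return training_mwh * 1e6 / wh_per_query

# Hypothetical run: 1,000 GPUs at 700 W for 30 days, overhead of 1.2.
train = training_energy_mwh(1_000, 700, 30, 1.2)
print(round(train))  # 605
# Hypothetical inference cost of 0.5 Wh per query.
print(round(queries_to_match_training(train, 0.5)))
```

With these made-up numbers the training run uses about 605 MWh, and roughly 1.2 billion queries would match it. A popular service handling that many requests in a matter of days or weeks would quickly make inference the larger share of its lifetime energy use.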

The energy source matters profoundly. If the electricity powering these data centers comes from fossil fuels, the AI revolution is inextricably linked to increased carbon emissions. The carbon footprint of AI is not just a metric; it is a global climate challenge. It forces a direct confrontation between technological progress and planetary sustainability.
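The carbon impact follows from multiplying the energy used by the carbon intensity of the grid that supplies it. This minimal sketch compares the same hypothetical 600 MWh workload on three grids. The intensity values are illustrative round numbers, not measurements of any particular grid, and `emissions_tonnes` is a hypothetical helper.

```python
# Illustrative grid carbon intensities in grams of CO2 per kWh (assumed).
GRID_INTENSITY_G_PER_KWH = {
    "coal-heavy grid": 800,
    "mixed grid": 400,
    "hydro-heavy grid": 30,
}

def emissions_tonnes(energy_mwh, grams_per_kwh):
    # MWh -> kWh, multiply by intensity, then grams -> tonnes.
    return energy_mwh * 1_000 * grams_per_kwh / 1e6

for grid, intensity in GRID_INTENSITY_G_PER_KWH.items():
    print(f"{grid}: {emissions_tonnes(600, intensity):.0f} t CO2")
```

The identical workload produces about 480 t, 240 t, and 18 t of CO2 respectively, which is why where the computation runs matters so much.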

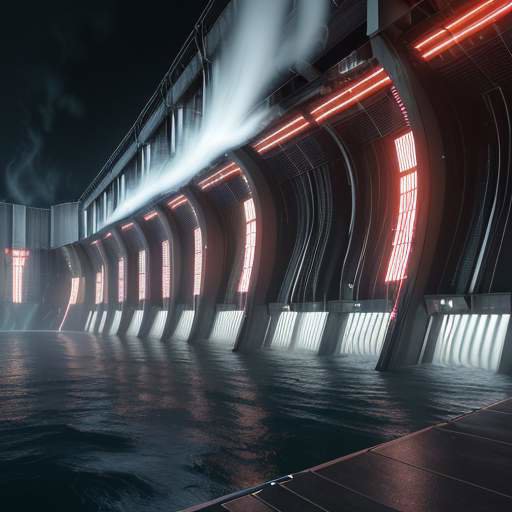

The Thermal Challenge: Managing Waste Heat

If energy is the fuel, heat is the byproduct. The second major physical challenge posed by AI is thermal management. Every transistor that performs a calculation generates waste heat. In a dense cluster of thousands of high-powered GPUs, this heat accumulation is not negligible; it is a critical engineering problem.

Data centers must keep temperature and humidity within specified ranges to ensure the longevity and peak performance of their hardware. If the cooling systems fail or are overwhelmed, chips throttle their performance or shut down to protect themselves, and operations can grind to a halt.

Historically, cooling has relied on massive Computer Room Air Conditioning (CRAC) units, which circulate chilled air through the racks. While effective, this method is energy-intensive itself, requiring enormous amounts of electricity to run chillers and pumps. The sheer volume of heat being dumped into the atmosphere—often through cooling towers—is a significant environmental concern, contributing to local thermal pollution.
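The overhead that chillers, fans, and pumps add is commonly summarized with Power Usage Effectiveness (PUE): total facility energy divided by the energy that actually reaches the IT equipment. A minimal sketch using hypothetical meter readings:

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy.

    1.0 would mean every kWh goes to computing; anything above 1.0 is
    overhead such as cooling, power conversion, and lighting.
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_kwh / it_equipment_kwh

def overhead_share(pue_value):
    # Fraction of total facility energy NOT used by IT equipment.
    return 1 - 1 / pue_value

# Hypothetical facility: 15,000 kWh drawn in total, 10,000 kWh reaching servers.
p = pue(15_000, 10_000)
print(p)                            # 1.5
print(round(overhead_share(p), 3))  # 0.333
```

A PUE of 1.5 means a third of the facility's energy goes to overhead such as cooling rather than to computation.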

The industry is therefore undergoing a radical shift in cooling technology. The focus is moving beyond simply dumping heat toward removing it more efficiently and, increasingly, reusing it.

Liquid Cooling: This is the most significant technological pivot. Instead of relying solely on air, advanced data centers are increasingly adopting direct-to-chip liquid cooling, in which coolant flows through cold plates mounted on the processors, or even immersion cooling, in which entire servers are submerged in an electrically non-conductive (dielectric) fluid. Liquid cooling is far more efficient than air cooling because a liquid can carry much more heat per unit volume than air (water, for example, roughly 3,500 times as much). It allows data centers to pack more compute power into a smaller physical space while managing the thermal load far more efficiently.
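The advantage of liquids can be checked with the basic heat-balance relation Q = m_dot × c_p × ΔT: the mass flow needed to carry away heat Q at a coolant temperature rise ΔT is Q / (c_p × ΔT). The sketch below compares air and water for a hypothetical 100 kW rack with a 10 K temperature rise, using approximate room-temperature textbook property values. Dielectric immersion fluids have a lower heat capacity than water, but they still carry far more heat per unit volume than air.

```python
# Approximate properties near room temperature (textbook values).
AIR = {"cp": 1005.0, "density": 1.2}       # J/(kg*K), kg/m^3
WATER = {"cp": 4186.0, "density": 1000.0}  # J/(kg*K), kg/m^3

def volumetric_flow_m3_per_s(heat_w, delta_t_k, fluid):
    # Q = m_dot * cp * dT  =>  m_dot = Q / (cp * dT)
    mass_flow = heat_w / (fluid["cp"] * delta_t_k)  # kg/s
    return mass_flow / fluid["density"]             # m^3/s

# Hypothetical 100 kW rack, coolant allowed to warm by 10 K.
air = volumetric_flow_m3_per_s(100_000, 10, AIR)
water = volumetric_flow_m3_per_s(100_000, 10, WATER)
print(f"air:   {air:.2f} m^3/s")
print(f"water: {water * 1000:.2f} L/s")
print(f"ratio: {air / water:.0f}x")
```

Under these assumptions, removing the heat takes roughly 8.3 cubic metres of air per second but only about 2.4 litres of water per second, a ratio of about 3,500.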

The Path to Green AI: Solutions and Mitigation Strategies

The narrative surrounding AI’s footprint cannot remain one of pure consumption. The industry, academia, and policymakers are recognizing that sustainability must be integrated into the core design principles of AI itself. This movement is giving rise to "Green AI."

Green AI is not just about building bigger, more efficient data centers; it is about making AI smarter and less wasteful in its operation.

1. Algorithmic Efficiency: Some of the most impactful changes are happening at the software level. Researchers are developing techniques like model quantization, pruning, and knowledge distillation.

- Quantization: Reducing the precision of the numbers used in calculations (e.g., from 32-bit floating point to 8-bit integers, a 4x reduction in storage per weight) substantially reduces the memory and computation needed, often with only a small loss of accuracy.

- Pruning: Identifying and removing the least important connections (weights) within a neural network, making the model smaller and, when the hardware and software can exploit the resulting sparsity, faster, often with little loss in accuracy.

- Distillation: Training a smaller, simpler "student" model to mimic the outputs of a massive, complex "teacher" model, so that it approaches the teacher's performance at a fraction of the cost.
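Quantization and pruning can both be illustrated on a plain list of weights. The sketch below shows symmetric per-tensor int8 quantization (a single scale factor maps floats to integers in [-127, 127]) and unstructured magnitude pruning (the smallest weights by absolute value are set to zero). It is a minimal illustration under those assumptions. Production toolchains add refinements such as per-channel scales and calibration data, and the function names here are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization.

    Maps floats in [-max|w|, +max|w|] onto integers in [-127, 127] and
    returns the integers plus the scale needed to map them back.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    # Recover approximate float values from the integer codes.
    return [v * scale for v in q]

def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of the weights."""
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value.
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    drop = set(smallest)
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

w = [0.82, -0.05, 0.31, -1.27, 0.02, 0.64]
q, s = quantize_int8(w)
print(q)                        # integer codes that fit in one byte each
print(dequantize(q, s))         # close to, but not exactly, the originals
print(magnitude_prune(w, 0.5))  # the three smallest weights set to zero
```

Storing each weight in one byte instead of four (float32) cuts memory by 4x. Pruning, by contrast, only saves computation when the runtime or hardware can skip the zeroed weights; otherwise the zeros are still stored and multiplied like any other weight.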

These methods allow powerful AI capabilities to be run on less powerful, less energy-intensive hardware, making AI more accessible and sustainable.
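Distillation commonly trains the student to match the teacher's softened output distribution. The sketch below computes one common form of that objective: the KL divergence between teacher and student probabilities after both sets of logits are divided by a temperature T, scaled by T^2 as is customary. The logits are made-up values and the function names are hypothetical; in practice this term is typically combined with an ordinary loss on the true labels.

```python
import math

def softmax(logits, temperature=1.0):
    # Divide by the temperature, then subtract the max for numerical stability.
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions,
    scaled by T^2 so gradient magnitudes stay comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's softened prediction
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2

teacher = [4.0, 1.5, 0.2]
print(distillation_loss([4.0, 1.5, 0.2], teacher))  # 0.0: student matches teacher
print(distillation_loss([0.2, 1.5, 4.0], teacher))  # large: student disagrees
```

A student that reproduces the teacher's logits gets a loss of zero; one that ranks the classes in the opposite order is penalized heavily.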

2. Renewable Integration and Grid Optimization: Data center operators are increasingly prioritizing locations near abundant, reliable renewable energy sources such as geothermal, hydro, and solar. Furthermore, they are implementing sophisticated power management systems that can dynamically shift computational loads to times when renewable energy generation is highest (e.g., scheduling intensive training jobs for midday, when solar output peaks).
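Load shifting of this kind can be sketched as a search over a carbon-intensity forecast: find the contiguous window with the lowest average intensity and start the job there. The 24-hour forecast below is invented to resemble a solar-heavy grid, and `best_window` is a hypothetical helper, not the API of any real scheduling tool.

```python
def best_window(forecast, hours_needed):
    """Return (start_hour, average) for the contiguous window with the lowest
    average carbon intensity. `forecast` holds one gCO2/kWh value per hour."""
    if hours_needed > len(forecast):
        raise ValueError("job is longer than the forecast")
    best_start, best_avg = 0, float("inf")
    for start in range(len(forecast) - hours_needed + 1):
        window = forecast[start:start + hours_needed]
        avg = sum(window) / hours_needed
        if avg < best_avg:
            best_start, best_avg = start, avg
    return best_start, best_avg

# Invented 24-hour forecast for a solar-heavy grid: cleanest around midday.
forecast = [450, 440, 430, 430, 420, 400, 360, 300, 240, 190, 160, 150,
            150, 160, 190, 240, 300, 360, 420, 450, 460, 460, 455, 450]
start, avg = best_window(forecast, 4)
print(start, avg)  # 10 155.0
```

For a four-hour job this picks the window starting at hour 10, centred on midday. A real carbon-aware scheduler may also weigh deadlines, electricity prices, and the cost of leaving hardware idle.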

3. Waste Heat Reuse (The Circular Economy Approach): This is perhaps the most revolutionary concept. Instead of treating waste heat as a problem to be dumped, it is treated as a valuable resource. Advanced data centers are now being designed to capture this thermal energy and redirect it. This captured heat can be used for:

- District Heating: Providing warmth to nearby residential or commercial buildings.

- Agriculture: Heating greenhouses or aquaculture facilities, creating a true circular energy loop.

This shift transforms the data center from a mere energy sink into a potential energy source for the local community.
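The potential scale of heat reuse can be estimated from the fact that nearly all the electricity consumed by IT equipment ends up as heat. The sketch below assumes a hypothetical 10 MW IT load running all year, a 60% capture fraction, and a heating demand of 15 MWh per home per year; all three figures are illustrative assumptions, and the helper names are hypothetical.

```python
HOURS_PER_YEAR = 8760

def recoverable_heat_mwh(it_load_mw, capture_fraction, utilization=1.0):
    # Nearly all electrical power consumed by IT equipment becomes heat;
    # only part of it can be captured at a useful temperature.
    return it_load_mw * utilization * capture_fraction * HOURS_PER_YEAR

def buildings_heated(heat_mwh, demand_mwh_per_building):
    # How many buildings with the given annual heat demand could be supplied.
    return heat_mwh / demand_mwh_per_building

# Hypothetical: 10 MW IT load, 60% of heat captured, 15 MWh demand per home.
heat = recoverable_heat_mwh(10, 0.6)
print(round(heat))                        # 52560 MWh per year
print(round(buildings_heated(heat, 15)))  # 3504
```

Under these assumptions roughly 52,560 MWh of heat per year could be recovered, enough for about 3,500 homes. In practice data center heat is low-temperature, so it often has to be boosted with heat pumps before it can feed a district heating network, which consumes additional electricity.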

Policy and the Future of Compute

The physical footprint of AI cannot be solved by technology alone; it requires policy intervention. Governments and international bodies must establish clear standards for energy efficiency and carbon accountability in the tech sector.

We need:

- Mandatory Energy Reporting: Requiring major AI developers to publicly report their energy consumption and carbon emissions, similar to how other heavy industries are regulated.

- Incentivizing Green Location: Offering tax breaks or subsidies for data centers that commit to 100% renewable energy sourcing and waste heat reuse.

- Standardizing Efficiency Metrics: Developing universal metrics for "AI efficiency" that relate the useful work done to the energy consumed per task (for example, energy per query answered), moving beyond simple FLOPS (floating-point operations per second) measurements.
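A task-based efficiency metric can be as simple as energy per unit of useful work. The sketch below computes joules per task from measured average power, run time, and tasks completed; the `Measurement` record and both systems' numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Measurement:
    # Hypothetical benchmark record for one system.
    name: str
    avg_power_w: float    # measured average power draw during the run
    duration_s: float     # wall-clock time of the run
    tasks_completed: int  # e.g. queries answered or tokens generated

    def joules_per_task(self) -> float:
        # Energy (J) = power (W) x time (s), divided by useful work done.
        return self.avg_power_w * self.duration_s / self.tasks_completed

runs = [
    Measurement("system A", avg_power_w=700, duration_s=60, tasks_completed=1200),
    Measurement("system B", avg_power_w=400, duration_s=60, tasks_completed=900),
]
for r in runs:
    print(f"{r.name}: {r.joules_per_task():.1f} J/task")
```

Here system B completes fewer tasks per minute than system A, yet uses about 26.7 J per task versus 35 J, a difference that a throughput or FLOPS figure alone would hide.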

The transition to a sustainable AI future requires a fundamental shift in mindset: viewing computational power not as an infinite resource, but as a finite, precious commodity that must be managed with the same care as water or rare earth minerals.

Conclusion: Balancing Progress with Planet

The story of AI is a story of immense potential, but it is also a story of profound physical responsibility. The dazzling promise of artificial intelligence, its potential to help tackle climate change, cure diseases, and unlock new frontiers of human knowledge, is currently tethered to a physical reality of massive energy consumption, intense heat generation, and resource strain.

The industry is at a critical juncture. The solutions are emerging, from liquid immersion cooling and algorithmic pruning to waste heat recapture and deep integration with renewable grids. These innovations suggest that the path forward is not a choice between intelligence and sustainability, but rather a mandate to achieve both simultaneously.

For AI to fulfill its promise to humanity, it must first prove its stewardship of the planet. The next chapter of the AI revolution will not be written solely in lines of code, but in the sustainable engineering of its physical infrastructure. The true measure of AI’s intelligence will ultimately be its ability to operate within the finite, beautiful constraints of our planet.