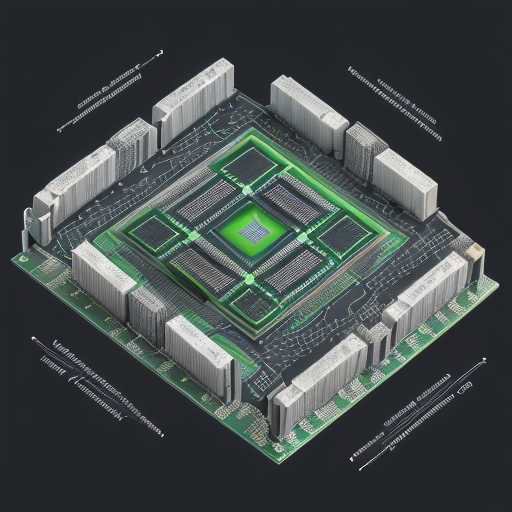

The artificial intelligence landscape is shifting. At the recent Nvidia GTC 2026 conference, Nvidia unveiled the Vera Rubin architecture, a successor to the H200 designed to broaden access to large-scale AI training and inference. For years, the H200 GPU has been the gold standard for high-performance computing, yet it carried a heavy price tag and relied heavily on High Bandwidth Memory (HBM). The new generation of silicon does not merely iterate on previous designs; it changes the relationship between processing cores and memory subsystems. By reducing dependence on expensive HBM stacks, Nvidia aims to lower the barrier to entry for enterprise AI while matching, and in some cases exceeding, the raw throughput of its predecessors. This article explores the technical specifications, economic implications, and strategic advantages of the Rubin chips as they replace the H200 in the global data center ecosystem.

The Rubin Architecture Revolution

The core innovation lies in the memory hierarchy. Traditional GPUs like the H200 relied heavily on HBM3e stacks to feed data to the CUDA cores. The Rubin architecture introduces a memory pooling strategy that allows for greater flexibility: the system can use standard DDR5 memory for certain workloads while reserving HBM for bandwidth-critical work. This hybrid approach significantly reduces the overall cost of ownership for data centers. The silicon die itself is larger and denser, integrating more transistors per square millimeter, which allows more complex neural network layers to be processed with less pressure on memory bandwidth. Engineers at Nvidia have also optimized the interconnects between the GPU and the CPU to make data movement more efficient. The result is a chip that consumes less power for the same amount of work.

Memory Efficiency and HBM Independence

One of the most significant announcements was the reduction in HBM dependence. Previously, every high-end AI cluster required massive investments in HBM3e modules. With Rubin, Nvidia has engineered a way to offload less critical data paths to standard memory channels. This does not mean performance is sacrificed; rather, performance is balanced against cost. The new memory controller can dynamically route traffic based on priority. For training large language models, the system prioritizes the HBM channels for gradient updates. For throughput-oriented inference, where latency is less critical, standard memory suffices. This architectural change allows cloud providers to build clusters with a mix of memory types that fits their budget. It also opens the door for smaller enterprises to deploy AI models that previously required enterprise-grade memory configurations. The thermal profile of the chip also improves, as the heat generated by memory access is reduced.
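The priority-based routing described above can be illustrated with a small placement sketch. This is not Nvidia's controller logic, which the announcement does not detail; it is a minimal greedy model, assuming each buffer carries a hypothetical integer priority and that HBM has a fixed capacity. The names `Tier`, `Buffer`, and `place_buffers` are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    HBM = "hbm"   # fast, scarce tier
    DDR = "ddr"   # standard memory tier


@dataclass
class Buffer:
    name: str
    size_gb: float
    priority: int  # hypothetical scale: higher means more bandwidth-sensitive


def place_buffers(buffers, hbm_capacity_gb):
    """Greedy placement: highest-priority buffers claim HBM first,
    and anything that no longer fits spills to standard memory."""
    placement = {}
    free_hbm = hbm_capacity_gb
    for buf in sorted(buffers, key=lambda b: b.priority, reverse=True):
        if buf.size_gb <= free_hbm:
            placement[buf.name] = Tier.HBM
            free_hbm -= buf.size_gb
        else:
            placement[buf.name] = Tier.DDR
    return placement


# Illustrative workload; sizes and priorities are made-up values.
workload = [
    Buffer("gradients", 40.0, priority=3),
    Buffer("weights", 60.0, priority=2),
    Buffer("cold_cache", 80.0, priority=1),
]
result = place_buffers(workload, hbm_capacity_gb=100.0)
for name, tier in result.items():
    print(name, tier.value)
```

With 100 GB of HBM in this toy setup, the gradient and weight buffers fill the fast tier and the low-priority cache lands in standard memory. A real controller would route traffic dynamically rather than place buffers once, so treat this only as a picture of the trade-off.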

Performance Benchmarks and Throughput

How does Rubin compare to the H200 in real-world scenarios? Early benchmarks suggest a 20% increase in tokens per second for inference tasks. For training, the efficiency gains are reported to be even more pronounced. The new chip handles sparse attention mechanisms with greater ease, which is crucial for modern transformer models. Power consumption has dropped by approximately 15% compared to the H200, thanks to improved clock gating and voltage regulation. This efficiency is critical as energy costs continue to rise globally and data centers come under pressure to meet sustainability goals. The cooling requirements are also less stringent, allowing for more compact rack designs, so existing infrastructure can be upgraded with minimal changes to the cooling systems. Throughput per watt is the metric that matters most here, and on these early figures Rubin improves on the H200.
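The two headline figures can be combined into a single throughput-per-watt estimate. The sketch below is back-of-the-envelope arithmetic, not a measured result: it assumes the 20% throughput gain and the roughly 15% power reduction apply to the same workload at the same time, which the early benchmarks do not confirm. The function name `perf_per_watt_gain` is illustrative.

```python
def perf_per_watt_gain(throughput_gain, power_reduction):
    """Relative improvement in throughput per watt.

    throughput_gain: fractional increase in throughput (0.20 for +20%).
    power_reduction: fractional decrease in power draw (0.15 for -15%).
    """
    new_throughput = 1.0 + throughput_gain   # relative to a baseline of 1.0
    new_power = 1.0 - power_reduction        # relative to a baseline of 1.0
    return new_throughput / new_power - 1.0


# Figures quoted in the article, assumed to hold simultaneously.
gain = perf_per_watt_gain(throughput_gain=0.20, power_reduction=0.15)
print(f"Estimated throughput-per-watt improvement: {gain:.1%}")
```

Under those assumptions the combined improvement is about 41%, since 1.20 / 0.85 is roughly 1.41. The gains compound rather than add, which is why the result is larger than 35%.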

Economic Impact on Data Centers

The financial implications of this shift are profound. The cost of deploying an AI cluster has historically been dominated by memory expenses. By reducing the need for HBM, Nvidia is effectively lowering the total cost of ownership for its customers. This price reduction can trickle down to the end user, making AI services more affordable. For cloud providers, it means they can offer more competitive pricing for GPU instances. The supply chain for HBM is notoriously constrained, with only a few manufacturers capable of producing it. By diversifying memory usage, Nvidia mitigates the risk of supply chain disruptions: if HBM production slows, Rubin chips can still function effectively. This resilience is a key selling point for enterprise clients who need guaranteed uptime. The market reaction has been overwhelmingly positive, with analysts predicting a surge in demand for Rubin-based systems.
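To see why a mixed memory configuration changes the cost picture, a simple blended-cost model helps. The sketch below is purely illustrative: the per-gigabyte costs are placeholder units, not real HBM or DDR5 prices, and the 25% HBM share is an arbitrary example rather than a Rubin specification. The function name `memory_cost` is hypothetical.

```python
def memory_cost(total_gb, hbm_fraction, hbm_cost_per_gb, ddr_cost_per_gb):
    """Total memory cost when hbm_fraction of capacity is HBM and the rest is DDR."""
    if not 0.0 <= hbm_fraction <= 1.0:
        raise ValueError("hbm_fraction must be between 0 and 1")
    hbm_gb = total_gb * hbm_fraction
    ddr_gb = total_gb - hbm_gb
    return hbm_gb * hbm_cost_per_gb + ddr_gb * ddr_cost_per_gb


# Placeholder cost units only; these are NOT market prices.
HBM_COST = 10.0
DDR_COST = 2.0

all_hbm = memory_cost(1000.0, 1.0, HBM_COST, DDR_COST)
hybrid = memory_cost(1000.0, 0.25, HBM_COST, DDR_COST)
saving = 1.0 - hybrid / all_hbm
print(f"all-HBM: {all_hbm:.0f}, hybrid: {hybrid:.0f}, saving: {saving:.0%}")
```

The size of the saving depends entirely on the price ratio between the two memory types and on how much of the workload can tolerate the slower tier, so real deployments would need measured values for both.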

Software and Ecosystem Integration

Hardware is only half the equation; software is the other half. Nvidia has updated its CUDA toolkit to fully support the Rubin architecture. Developers will find that existing code requires minimal modification to run on the new chips, and the compiler automatically optimizes for the hybrid memory architecture. This smooth transition is vital for maintaining productivity. Furthermore, libraries for deep learning frameworks such as PyTorch and TensorFlow have been updated to take advantage of the new memory pools. The ecosystem is expanding to include tools for monitoring memory usage and optimizing workload distribution, helping developers get the most out of their hardware investments. Community support is robust, with forums and documentation available to assist with migration.

Strategic Implications for Enterprise AI

Large corporations are now reconsidering their hardware procurement strategies. The move away from pure HBM reliance allows for more flexible budgeting, so IT departments can allocate funds to other areas such as security or networking. The Rubin chip also supports edge computing better than the H200, because its lower memory footprint allows for deployment in more constrained environments. Factories and hospitals can now run local AI models without needing a massive server room. This decentralization of AI processing enhances data privacy and reduces latency. The strategic advantage for Nvidia is clear: it is locking in customers with a versatile platform that scales with their needs. Competitors will face a tough challenge in matching this level of integration.

Conclusion

The Nvidia GTC 2026 event marked a turning point for the AI hardware industry. The introduction of the Vera Rubin chips represents a mature evolution of GPU technology. By replacing the H200 and reducing HBM dependence, Nvidia has addressed the two biggest pain points of the current market: cost and supply chain fragility. The performance gains are substantial, ensuring that the new chips do not compromise on capability. As we look toward the next decade of artificial intelligence, the Rubin architecture sets a new standard for efficiency. Enterprises that adopt this technology early will gain a significant competitive edge. The future of AI is not just about raw power; it is about sustainable, cost-effective power. Nvidia has delivered exactly that.